
In some Hadoop distributions, Flume can be started as a service when the system boots, such as “service start flume.” This configuration allows for automatic use of the Flume agent.

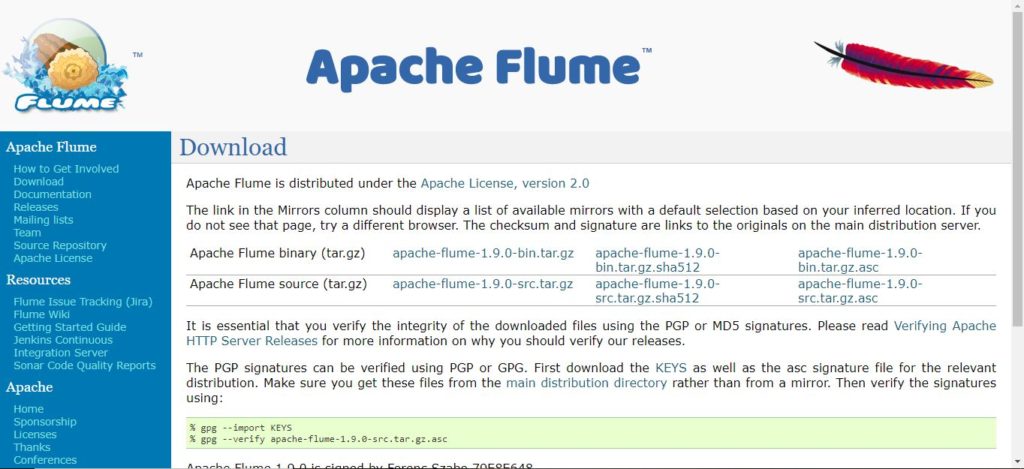

This agent writes the data into HDFS and should be started before the source agent. $ flume-ng agent -c conf -f nf -n collector Now that the data directories are created, the Flume target agent can be started (as user hdfs). $ hdfs dfs -mkdir /user/hdfs/flume-channel/ Next, as user hdfs, make a Flume data directory in HDFS. To run the example, create the directory as root. The web log is also mirrored on the local file system by the agent that writes to HDFS. nf-The source Flume agent that captures the web log data nf-The target Flume agent that writes the data to HDFS (See the sidebar “Flume Configuration Files.”) The full source code and further implementation notes are available from the book web page in Appendix A, “Book Web Page and Code Download.” Two files are needed to configure Flume. This example is easily modified to use other web logs from different machines. In this example web logs from the local machine will be placed into HDFS using Flume. For more information and example configurations, please see the Flume Users Guide at. The full scope of Flume functionality is beyond the scope of this book, and there are many additional features in Flume such as plug-ins and interceptors that can enhance Flume pipelines. There are many possible ways to construct Flume transport networks. Sink-The sink delivers data to destinations such as HDFS, a local file, or another Flume agent.įigure 4.6 A Flume consolidation network. It can be thought of as a buffer that manages input (source) and output (sink) flow rates. web log) or another Flume agent.Ĭhannel-A channel is a data queue that forwards the source data to the sink destination. The input data can be from a real-time source (e.g. It can send the data to more than one channel. Source-The source component receives data and sends it to a channel. 
Flume is often used for log files, social-media-generated data, email messages, and pretty much any continuous data source.Īs shown in Figure 4.4, a Flume agent is composed of three components: Often data transport involves a number of Flume agents that may traverse a series of machines and locations. In order to use this type of data for data science with Hadoop, we need a way to ingest such data into HDFS.Īpache Flume is designed to collect, transport, and store data streams into HDFS. In addition to structured data in databases, another common source of data is log files, which usually come in the form of continuous (streaming) incremental files often from multiple source machines. Learn More Buy Using Apache Flume to Acquire Data Streams Here we are using UUID as a row key generator for the primary key.Practical Data Science with Hadoop and Spark: Designing and Building Effective Analytics at Scale This should be configured in cases where we need a custom row key value to be auto generated and set for the primary key column.įor an example configuration for ingesting Apache access logs onto Phoenix, see this property file. Can be one of timestamp,date,uuid,random and nanotimestamp. The data type for these columns are VARCHAR by default.Ī custom row key generator. Headers of the Flume Events that go as part of the UPSERT query. The columns that will be extracted from the Flume event for inserting into HBase. The regular expression for parsing the event.

Recommended to include the IF NOT EXISTS clause in the ddl.Įvent serializers for processing the Flume Event.

If specified, the query will be executed. The CREATE TABLE query for the HBase table where the events will be upserted to. The name of the table in HBase to write to.
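To make the serializer, header, and row key settings above more concrete, here is a minimal Python sketch of what a regex-based event serializer does conceptually: match the event body against a regular expression, map the capture groups onto the configured columns, append selected Flume headers as extra VARCHAR columns, prepend a generated row key, and build a Phoenix UPSERT statement plus a CREATE TABLE IF NOT EXISTS DDL. This is not the Phoenix sink's implementation; the function names, the ROW_KEY column, the WEB_LOGS table, the access-log regex, and the way each row key type is generated are all illustrative assumptions.

```python
import random
import re
import time
import uuid
from datetime import datetime, timezone

# Illustrative values (assumptions, not taken from any real configuration).
LOG_REGEX = r'^(\S+) \S+ \S+ \[([^\]]+)\] "([^"]*)" (\d{3})'
COLUMNS = ["CLIENT_IP", "REQ_TIME", "REQUEST", "STATUS"]
SAMPLE = '127.0.0.1 - - [10/Oct/2000:13:55:36 -0700] "GET /index.html HTTP/1.0" 200 2326'


def make_row_key(rowkey_type):
    """Hypothetical generator for each row key type named in the settings list."""
    if rowkey_type == "uuid":
        return str(uuid.uuid4())
    if rowkey_type == "timestamp":
        return str(int(time.time() * 1000))  # milliseconds since the epoch
    if rowkey_type == "date":
        return datetime.now(timezone.utc).isoformat()
    if rowkey_type == "random":
        return str(random.getrandbits(63))
    if rowkey_type == "nanotimestamp":
        return str(time.time_ns())
    raise ValueError(f"unknown row key type: {rowkey_type}")


def serialize_event(body, headers, regex, columns, header_names, rowkey_type="uuid"):
    """Turn one event into (column names, values); return None if the regex does not match."""
    match = re.match(regex, body)
    if match is None:
        return None
    values = list(match.groups())
    if len(values) != len(columns):
        raise ValueError("the regex must have one capture group per column")
    # Selected headers become extra columns (declared VARCHAR in build_ddl below).
    values += [headers.get(name, "") for name in header_names]
    names = ["ROW_KEY"] + list(columns) + list(header_names)
    return names, [make_row_key(rowkey_type)] + values


def build_upsert(table, names):
    """Parameterized Phoenix UPSERT; values are bound separately, not formatted in."""
    placeholders = ", ".join("?" for _ in names)
    return f"UPSERT INTO {table} ({', '.join(names)}) VALUES ({placeholders})"


def build_ddl(table, columns, header_names):
    """CREATE TABLE with IF NOT EXISTS, so re-running the DDL is harmless."""
    cols = ", ".join(f"{c} VARCHAR" for c in list(columns) + list(header_names))
    return f"CREATE TABLE IF NOT EXISTS {table} (ROW_KEY VARCHAR NOT NULL PRIMARY KEY, {cols})"


if __name__ == "__main__":
    print(build_ddl("WEB_LOGS", COLUMNS, ["host"]))
    names, values = serialize_event(SAMPLE, {"host": "web01"}, LOG_REGEX, COLUMNS, ["host"])
    print(build_upsert("WEB_LOGS", names))
    print(values)
```

Because the DDL uses IF NOT EXISTS, executing it every time the sink starts is safe, which is why including that clause is recommended.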

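Returning to the Flume agent model described earlier, the source/channel/sink split and the local mirroring in the web log example can be sketched in plain Python. This is an in-process toy, not Flume's Java API, and the class names Event, Channel, Source, and ListSink are hypothetical. A bounded `queue.Queue` plays the channel, so a full channel pushes back on the source; the source copies every event to each attached channel, similar in spirit to Flume's default replicating channel selector. One channel feeds an "HDFS" sink and the other a "local file" sink, and both simply collect events in a list.

```python
import queue
from dataclasses import dataclass


@dataclass
class Event:
    headers: dict
    body: bytes


class Channel:
    """Bounded buffer between a source and a sink (stand-in for a Flume channel)."""

    def __init__(self, capacity):
        self._queue = queue.Queue(maxsize=capacity)

    def put(self, event, timeout=1.0):
        # Blocks while the channel is full and raises queue.Full after `timeout`,
        # which is how a slow sink pushes back on a fast source.
        self._queue.put(event, timeout=timeout)

    def take(self):
        try:
            return self._queue.get_nowait()
        except queue.Empty:
            return None


class Source:
    """Receives raw records and writes each one to every attached channel."""

    def __init__(self, channels):
        self.channels = channels

    def emit(self, line, headers=None):
        event = Event(headers or {}, line.encode("utf-8"))
        for channel in self.channels:
            channel.put(event)


class ListSink:
    """Drains one channel into a list (stand-in for an HDFS or local-file sink)."""

    def __init__(self, channel):
        self.channel = channel
        self.delivered = []

    def drain(self, max_events=100):
        count = 0
        while count < max_events:
            event = self.channel.take()
            if event is None:
                break
            self.delivered.append(event)
            count += 1
        return count


if __name__ == "__main__":
    hdfs_channel, local_channel = Channel(capacity=10), Channel(capacity=10)
    source = Source([hdfs_channel, local_channel])
    for i in range(3):
        source.emit(f"GET /page{i}.html 200", {"host": "web01"})
    hdfs_sink, local_sink = ListSink(hdfs_channel), ListSink(local_channel)
    print(hdfs_sink.drain(), local_sink.drain())  # prints "3 3": each sink gets every event
```

Draining in batches (`max_events`) loosely mirrors a sink taking events from its channel a group at a time; a real Flume channel can also be durable (for example, a file channel), which an in-memory queue is not.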